AI-First UX & Workflow Transformation Sample

Designing an AI-enabled operating model that moved teams from fragmented decision-making to scalable, system-level acceleration.

Executive Summary

In this sample case study, enterprise teams were under pressure to move faster with AI—but fragmented decision-making and inconsistent UX slowed delivery. I led the transition to an AI-first operating model, embedding decision-making into systems, patterns, and governance. This turned AI from a feature into infrastructure—enabling faster decisions, greater reuse, and consistent execution at scale.

The result: accelerated delivery with reduced rework and zero compromise on trust or quality.

Role, Scope, Impact

Role

Led AI UX transformation across enterprise platforms

Scope

Defined the AI-first operating model, design system integration, and governance across product, design, and engineering

Users

Designers, Product Managers, Engineers (primary)

End users across multiple surfaces and workflows (secondary)

Scale

4 enterprise surfaces

20+ workflows

Global product teams

Impact

The impact wasn't about using AI for its own sake. It was about enabling faster decision-making by equipping the organization with the right tools, frameworks, and context, while preserving enough agency for role-specific judgment in service of building better products.

100% of AI UX shipped via design systems

Zero rollbacks of AI experiences due to trust issues

60–70% faster decision-making cycles

The Problem

Ambiguity bottlenecks

Designers were spending significant time reverse-engineering intent from incomplete or low-signal inputs. Traditional long-form docs (PRDs/PRFAQs) were too slow to keep up with monthly release expectations.

Outdated execution habits

AI introduced risk, not leverage

Even with AI tools available, teams were stuck in legacy UX practices that assumed stable requirements and long cycles—creating tension between “move fast” demands and quality expectations.

Without patterns, governance hooks, and consistent contracts, AI features risked being fragmented, inconsistent, and unsafe—especially in enterprise workflows where trust is everything.

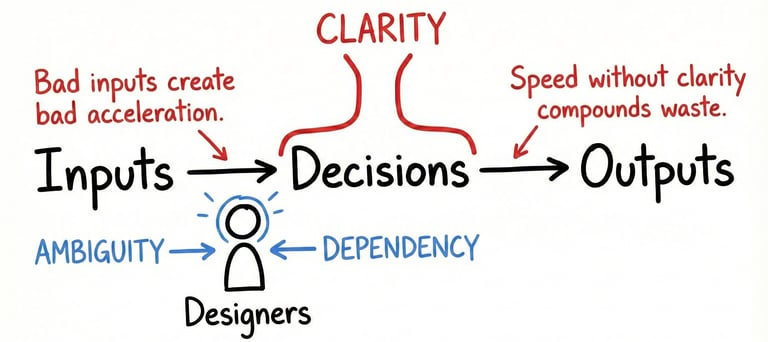

Core insight: AI would not help unless we redesigned how decisions are made, not just how screens are produced.

We had 3 compounding issues:

1

2

3

The Shift

We re-framed AI-first UX from a feature-led initiative into a system-level intervention. Rather than asking where AI could be added, we asked a harder question: what had to change in how work moved through the organization for AI to actually create leverage?

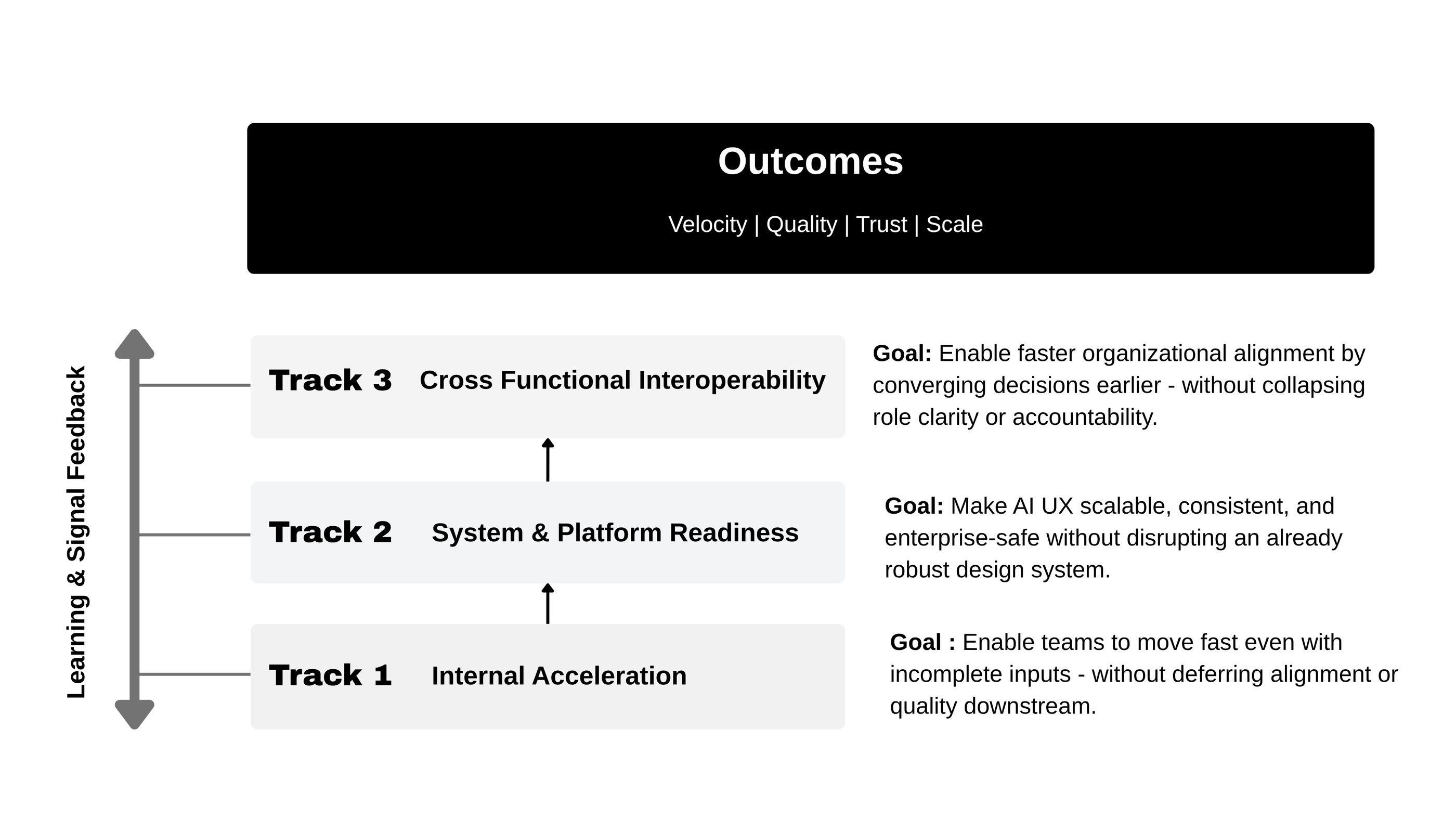

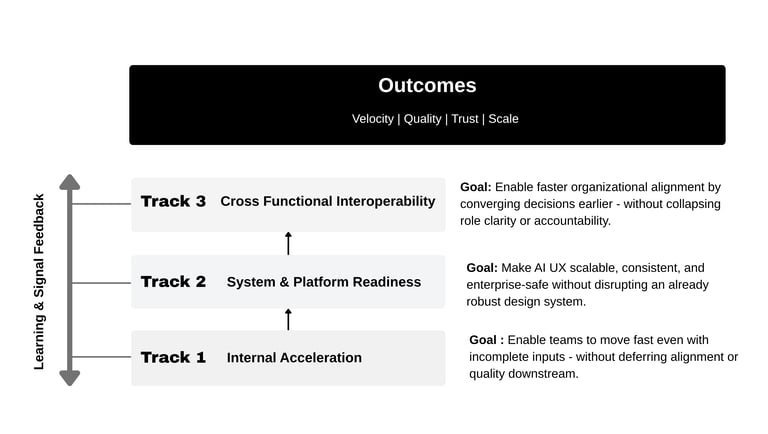

The answer wasn’t a single solution. It was a coordinated shift across three interconnected acceleration tracks—each addressing a different failure mode of speed at scale.

Together, these tracks transformed AI from a set of tools into an operating system for decision-making—accelerating teams without fragmenting the platform, compromising trust, or collapsing accountability.

What We Put In Place

We established a framework of processes that allowed the organization to

Accelerate exploration

Embed AI safely across the product experiences through design systems through

An established AI UX pattern taxonomy

AI component level anatomy

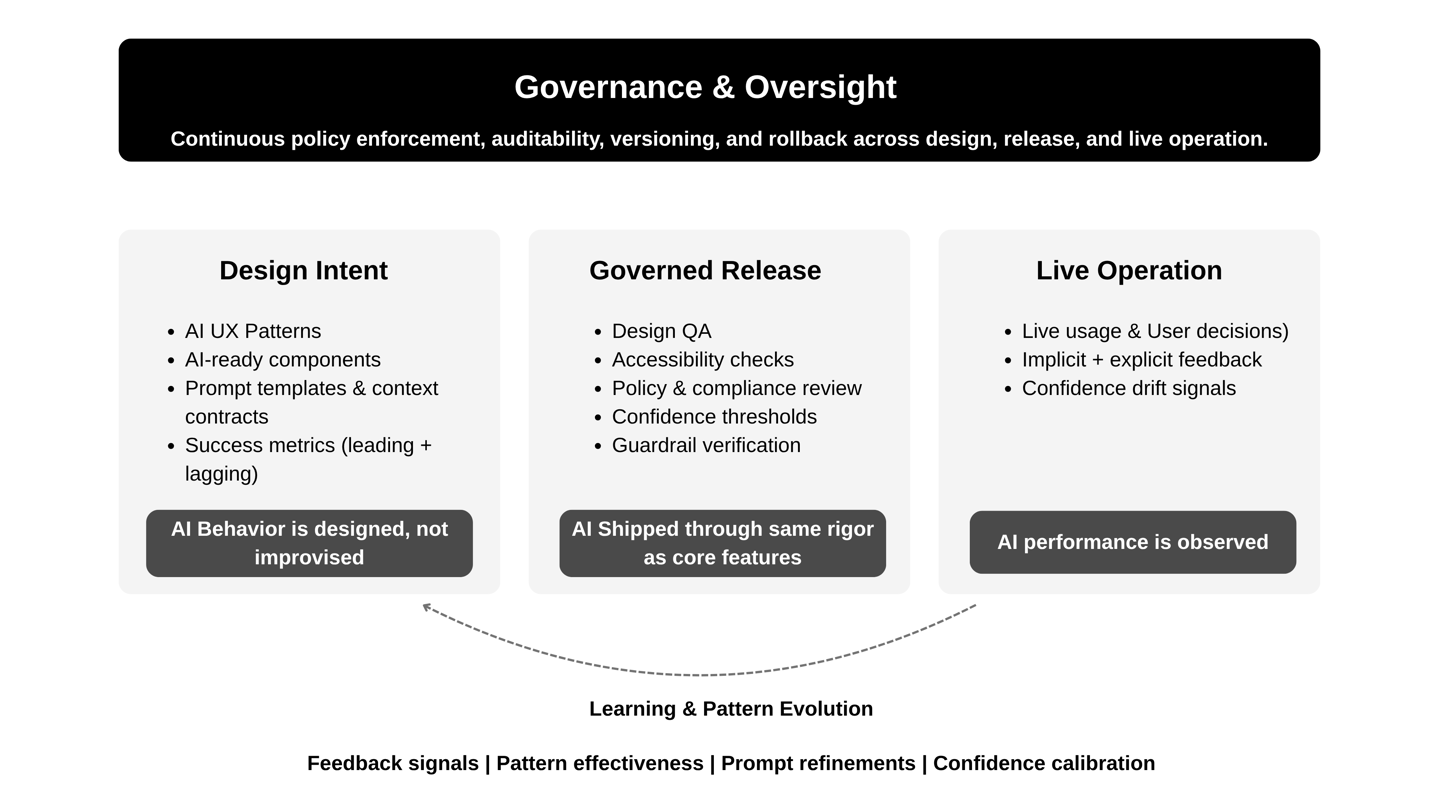

Governance and oversight so AI stays safe at scale

Make more confident decisions through a commitment decision operating model

While the patterns, acceleration frameworks, and design system integration were key, it was the overarching governance and oversight model that let us map where AI was making or influencing decisions across customer, employee, and system layers, and then identify where those decisions lacked visibility, control, or consistency.

This allowed us to confidently design a unified decisioning framework, not just assistants, where AI outputs are explainable, actions are gated by risk, and humans are integrated intentionally. Over time, this becomes less about interfaces and more about orchestrating intelligence across our experiences.

Outcomes: What Changed at the System Level

Measurable gains in speed, decision quality, and organizational scalability — without sacrificing trust or governance.

Decision Velocity & Delivery

- ~60–70% faster decision cycles
- ~30–40% reduction in late-stage reversals
- Earlier design commitment through decision-grade artifacts

Quality, Trust & Risk Control

- Zero AI-related release rollbacks across governed surfaces: enterprise-safe AI delivery with zero critical regressions
- 100% of AI UX shipped through design system governance, with fallbacks, explainability, and human override by default

Organizational Scale

- 4 core enterprise surfaces reused shared AI UX patterns
- 20+ workflows shipped via common contracts
- Designed once, reused everywhere: no team-specific AI UX decisions required

What This Enabled (Strategic Impact)

This transformation created durable leverage beyond individual features or teams.

A repeatable AI operating model where new capabilities could be introduced safely and consistently, without re-litigating trust, UX quality, or governance each time.

Earlier, higher-confidence decisions across Product, Design, and Engineering — shifting commitment upstream and reducing downstream churn under speed pressure.

A platform mindset for AI, moving the organization from “AI features” to AI as a system accelerator across multiple enterprise surfaces.

Most importantly, it delivered a concrete answer to the executive mandate:

“Go faster with AI — without sacrificing enterprise requirements, coherence, or trust.”

What I’d Do Next

With the operating model in place, the next evolution would focus on precision, not speed:

Stronger confidence instrumentation — making confidence, drift, and override patterns measurable signals, not inferred behavior.

Deeper automated validation for AI UX patterns, reducing manual review while preserving safety and accessibility guarantees.

Tighter linkage between decision artifacts and roadmap execution, closing the loop from intent → commitment → delivery outcomes.